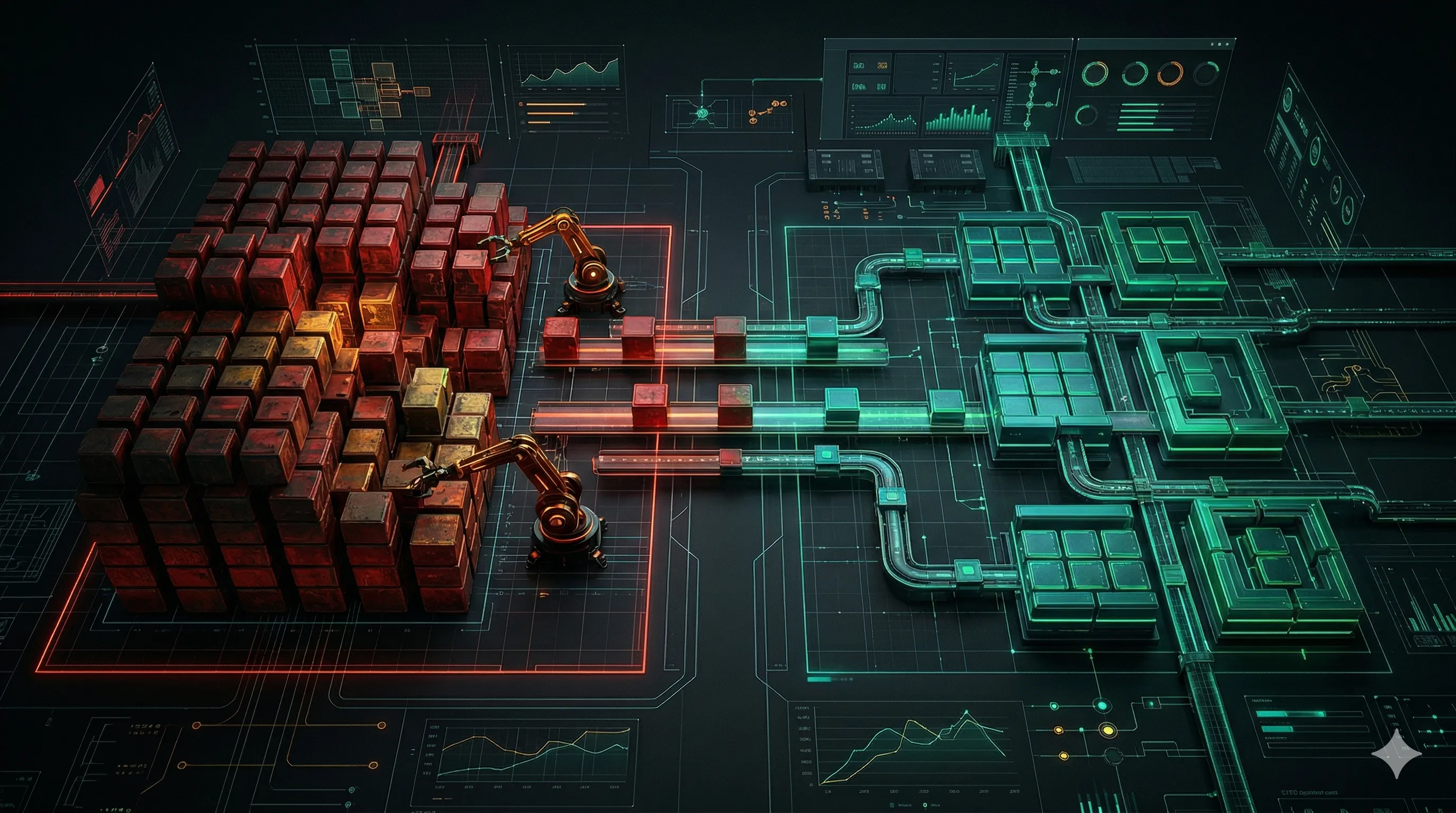

Enterprise teams rarely fail a Selenium to Playwright migration because Playwright is weak. They fail because they treat the migration as a rewrite instead of an operating model change.

That framing mistake is expensive. A real migration changes four systems at once: the test runtime, the CI/CD feedback loop, the ownership model between QA and engineering, and the way AI can safely accelerate daily work. If we treat all of that like a simple framework swap, we create a beautiful repository and a broken delivery pipeline.

If you have already read “From Selenium to Playwright: A Data-Driven Look at the Shifting Landscape of Test Automation,” think of this article as the operational sequel. The first question is whether the industry is moving. That answer is already clear. The second question is harder: how do we migrate an enterprise suite without blowing a hole in release confidence?

My answer is the Tetris Doctrine.

The Illusion of Stability in the Legacy Swamp

Many organizations describe a legacy Selenium stack like this:

“It is slow, brittle, and nobody enjoys touching it, but at least it is stable.”

Usually, that is not stability. It is tolerated fragility.

The pattern shows up the same way across enterprise environments:

- The CI Bottleneck: Feedback arrives too late to shape developer behavior.

- The QA Silo: Test code lives in a separate language, style, and ownership model from the product code.

- The Maintenance Tax: Teams spend more time repairing automation than learning from it.

- The AI Blind Spot: Modern workflows such as structured triage, test generation, log reasoning, and draft remediation work better when the stack is observable, typed, and automation-friendly.

This is why the migration is strategic, not cosmetic. A slow Java/Selenium estate attached to a TypeScript product organization is not just a tooling mismatch. It is a collaboration mismatch. Developers do not review the tests, platform teams do not trust the signals, and leadership pays for two engineering cultures instead of one.

Playwright helps because it tightens the loop: richer traces, stronger defaults, better ergonomics, and a much cleaner path into the TypeScript ecosystem. But the framework alone does not solve the migration problem. Governance does.

The Migration Minefield

When leadership finally approves a migration, teams usually drift into one of three bad strategies.

| Strategy | Why It Feels Attractive | What Actually Happens | Outcome |
|---|---|---|---|
| Big Bang Rewrite | Clean story, one destination, no hybrid period | Coverage disappears for months while the new suite catches up | Product risk explodes |
| Parallel Maintenance | Keeps legacy protection while the new suite grows | The same people maintain two systems and finish neither | Team burnout and no visible ROI |
| Lift and Shift | Easy to estimate because it looks like translation | Old redundancy, weak assertions, and flaky logic get copied into new tooling | Faster garbage |
| Tetris Doctrine | Less glamorous and more disciplined | One full business block is migrated, stabilized, and decommissioned at a time | Controlled risk with measurable gains |

The Big Bang approach is usually a leadership fantasy. It produces a heroic roadmap slide and then silently creates a coverage gap that nobody can defend in front of a production incident.

Parallel Maintenance is the opposite trap. It sounds responsible because nothing is retired, but it strands the architect in endless legacy firefighting. The business sees cost. It does not see progress.

Lift and Shift is the most deceptive path of all. Teams convince themselves they are modernizing because the language and framework changed. In reality, they ported obsolete tests, duplicated logic, and brittle assumptions into a newer execution engine.

The Tetris Doctrine rejects all three.

What the Tetris Doctrine Actually Means

The doctrine is simple to explain and hard to practice: migrate one complete business module at a time, prove value, then clear that line before moving to the next one.

Not ten percent of login, ten percent of checkout, and ten percent of billing.

One module. End to end. Finished.

That means each migration block includes:

- The Playwright test flows for that domain

- The data setup and fixtures needed to keep it deterministic

- The CI path and reporting required to make failures useful

- The ownership model for who reviews and maintains it

- The retirement plan for the Selenium counterpart

When we work this way, the migration becomes visible to leadership and survivable for the team.

```mermaid
flowchart TD
    classDef legacy fill:transparent,stroke:#ef4444,stroke-width:2px;
    classDef modern fill:transparent,stroke:#22c55e,stroke-width:2px;
    classDef neutral fill:transparent,stroke:#94a3b8,stroke-width:1.5px;

    Backlog["Legacy Selenium Estate"]:::legacy
    Score["Score Modules By Risk, Value, And Pain"]:::neutral
    M1["Migrate Module 1: Billing"]:::modern
    R1["Retire Billing Selenium Coverage"]:::legacy
    M2["Migrate Module 2: Onboarding"]:::modern
    R2["Retire Onboarding Selenium Coverage"]:::legacy
    M3["Migrate Module 3: Admin Operations"]:::modern
    End["Shrinking Legacy Perimeter"]:::modern

    Backlog --> Score --> M1 --> R1 --> M2 --> R2 --> M3 --> End
```

The doctrine only works if the organization accepts one uncomfortable truth: the legacy suite is no longer a sacred asset. It is a shrinking perimeter. Its job is to protect the parts of the product we have not modernized yet, not to compete forever with the new system.

Establish The Strategic Beachhead

Teams often ask whether they should start with the easiest module. Usually, no.

The first block should be a Strategic Beachhead: a domain that is important enough to matter, complex enough to prove the architecture, and bounded enough to finish.

Good beachhead candidates often include:

- Checkout or subscription billing

- Core onboarding with authentication and setup

- A high-value admin workflow tied to revenue or compliance

Poor beachhead candidates often include:

- A trivial marketing form with low business risk

- A module with no clear owner

- A domain undergoing a redesign so chaotic that any baseline will die immediately

Here is a practical scoring model:

| Module Signal | Why It Matters | High Score Means |
|---|---|---|
| Business Criticality | Leadership pays attention to visible wins | Strong ROI narrative |
| Regression Pain | Existing failures already waste time | Immediate value after migration |
| Integration Complexity | Exercises auth, data, APIs, and UI together | Proves the architecture is real |
| Selenium Maintenance Cost | Quantifies current pain | Easier justification for retirement |
| Shared Ownership Potential | Developers can read and review tests | Breaks the QA silo |
| Observability Readiness | Logs, traces, and fixtures already exist | Faster stabilization |

Pseudo-code is often enough to force the right conversation:

```typescript
// Each numeric signal is a team-assigned rating; higher means a stronger case for going first.
type Module = {
  name: string;
  businessCriticality: number;
  regressionPain: number;
  integrationComplexity: number;
  seleniumMaintenanceCost: number;
  sharedOwnershipPotential: number;
  observabilityReadiness: number;
  ownerMissing: boolean;     // nobody clearly owns the module
  redesignInFlight: boolean; // a redesign would invalidate any baseline
};

// Weighted sum of the signals, minus penalties for the two disqualifiers.
function migrationScore(module: Module): number {
  let score =
    module.businessCriticality * 3 +
    module.regressionPain * 3 +
    module.integrationComplexity * 2 +
    module.seleniumMaintenanceCost * 2 +
    module.sharedOwnershipPotential * 2 +
    module.observabilityReadiness;

  if (module.ownerMissing) score -= 4;
  if (module.redesignInFlight) score -= 3;

  return score;
}
```

That is more valuable than debating frameworks for three weeks. It turns migration into portfolio management.
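To make the portfolio view concrete, here is a small usage sketch that ranks three candidates with the same weights and penalties as the scoring function. The module names and the 1 to 5 ratings are invented for illustration, and the type is renamed `ScoredModule` only so the block stands on its own.

```typescript
// Hypothetical 1-5 ratings; none of these numbers come from a real assessment.
type ScoredModule = {
  name: string;
  businessCriticality: number;
  regressionPain: number;
  integrationComplexity: number;
  seleniumMaintenanceCost: number;
  sharedOwnershipPotential: number;
  observabilityReadiness: number;
  ownerMissing: boolean;
  redesignInFlight: boolean;
};

// Same weights and penalties as the scoring function in the article.
function scoreModule(m: ScoredModule): number {
  let score =
    m.businessCriticality * 3 +
    m.regressionPain * 3 +
    m.integrationComplexity * 2 +
    m.seleniumMaintenanceCost * 2 +
    m.sharedOwnershipPotential * 2 +
    m.observabilityReadiness;
  if (m.ownerMissing) score -= 4;
  if (m.redesignInFlight) score -= 3;
  return score;
}

const candidates: ScoredModule[] = [
  {
    name: "Billing",
    businessCriticality: 5, regressionPain: 4, integrationComplexity: 4,
    seleniumMaintenanceCost: 4, sharedOwnershipPotential: 3, observabilityReadiness: 3,
    ownerMissing: false, redesignInFlight: false,
  },
  {
    name: "Marketing form",
    businessCriticality: 1, regressionPain: 1, integrationComplexity: 1,
    seleniumMaintenanceCost: 2, sharedOwnershipPotential: 2, observabilityReadiness: 3,
    ownerMissing: false, redesignInFlight: false,
  },
  {
    name: "Admin operations",
    businessCriticality: 4, regressionPain: 3, integrationComplexity: 3,
    seleniumMaintenanceCost: 3, sharedOwnershipPotential: 2, observabilityReadiness: 2,
    ownerMissing: true, redesignInFlight: false,
  },
];

// Highest score first: the top entry is the beachhead candidate to debate.
const rankedCandidates = candidates
  .map((m) => ({ name: m.name, score: scoreModule(m) }))
  .sort((a, b) => b.score - a.score);
```

With these made-up ratings, Billing scores 52, Admin operations drops to 35 because of the missing-owner penalty, and the marketing form lands at 19. The exact numbers matter less than the fact that the team has to defend each rating out loud.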

Build The Foundation Before Module One

One reason migrations stall is that teams start porting tests before they finish designing the system those tests are supposed to live in.

Before the beachhead module begins, the new Playwright estate should already have a minimum foundation:

| Foundation Element | Why It Must Exist Early |
|---|---|
| Repository conventions | Prevents every engineer from inventing a different style for fixtures, locators, and assertions |
| Deterministic data setup | Stops the first migrated module from becoming flaky due to weak state control |
| Trace and artifact policy | Makes failures diagnosable from day one |
| PR quality gate | Ensures the new framework does not inherit the same chaos it is replacing |
| Reporting and ownership | Gives developers a fast path from failure to action |
| Review standards | Keeps AI-generated or hurried code from slipping into the main branch unchallenged |

This is where many teams underinvest because the work does not look glamorous. But this foundation is what makes the first migrated module believable. Without it, the team may demo a green run once and then spend six weeks arguing about why the suite cannot stay green.

I usually recommend building the first block of the Playwright platform as if it were a product:

- A clear folder strategy

- Shared fixtures with explicit ownership

- Base helpers for navigation, auth, and seeded data

- Mandatory traces on failure

- A small but strict pre-merge gate

That is also why “Building a Quality Gate for Your Automation Project” remains directly relevant during migration. A modern framework without a disciplined gate is just a faster way to create new debt.
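As a starting point for the trace policy and the pre-merge gate, a minimal `playwright.config.ts` might look like the sketch below. The folder name, retry count, and report path are assumptions, not recommendations from a specific project; the options themselves (`forbidOnly`, `retries`, `reporter`, `use.trace`, `use.screenshot`) are standard Playwright Test configuration.

```typescript
// playwright.config.ts: a sketch, not a definitive setup.
import { defineConfig } from '@playwright/test';

export default defineConfig({
  testDir: './tests',                 // hypothetical folder strategy
  forbidOnly: !!process.env.CI,       // fail the CI run if a stray test.only is committed
  retries: process.env.CI ? 1 : 0,    // assumed value; keep it low so flakiness stays visible
  reporter: [
    ['html', { open: 'never' }],
    ['junit', { outputFile: 'results/junit.xml' }], // hypothetical path for CI ingestion
  ],
  use: {
    trace: 'retain-on-failure',       // mandatory traces on failure
    screenshot: 'only-on-failure',
  },
});
```

Keeping retries low is a deliberate choice here: a generous retry budget makes the dashboard green while hiding exactly the instability the migration is supposed to remove.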

AI Is An Accelerator, Not The Authority

This is where research on AI-assisted engineering materially sharpens the migration blueprint.

AI is genuinely useful during a Playwright migration, but not because it “writes the tests for us.” Its value is broader and more operational:

- It decomposes vague requirements into concrete test scenarios

- It turns logs, traces, and failure clusters into faster RCA drafts

- It generates edge-case payloads, SQL checks, and structured fixtures

- It helps engineers cross the language and framework gap faster

But it must stay in the correct lane.

| AI Role | Best Use During Migration | What We Must Never Delegate Blindly |
|---|---|---|
| Assistant | Test design, bug report cleanup, SQL drafting, edge-case brainstorming | Final sign-off on business correctness |
| Copilot | Refactoring locators, fixtures, helpers, and page models in IDE context | Silent code acceptance without review |
| Agent | Draft PRs, CI triage, artifact analysis, suggested remediations | Unapproved merges, destructive commands, direct production actions |

This distinction matters because enterprise migration is not only about writing code. It is about controlling risk while the system is in motion.

That is why I prefer the following model:

- AI produces structured proposals

- Humans approve architecture and behavioral intent

- Deterministic quality gates approve whether code is allowed forward

The same principle appears in “Building a Quality Gate for Your Automation Project” and becomes even more important when we introduce agentic workflows such as the pattern discussed in “GitHub Agentic Workflow.” The model can accelerate. The gate still decides.
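The three-way split between AI proposals, human approval, and deterministic gates can be sketched as a small merge-eligibility check. The type and field names below are hypothetical; the point is only that authorship never grants a shortcut and that a missing gate result counts as a failure.

```typescript
type GateCheck = { checkName: string; passed: boolean };

type ChangeProposal = {
  author: "ai" | "human";        // recorded for audit; it never relaxes the rules below
  humanApprovedIntent: boolean;  // a reviewer signed off on scope and test oracle
  gateChecks: GateCheck[];       // e.g. typecheck, lint, Playwright run
};

function mergeDecision(p: ChangeProposal): { allowed: boolean; reasons: string[] } {
  const reasons: string[] = [];
  if (!p.humanApprovedIntent) {
    reasons.push("intent not approved by a human reviewer");
  }
  if (p.gateChecks.length === 0) {
    reasons.push("no gate checks were recorded");
  }
  for (const check of p.gateChecks) {
    if (!check.passed) reasons.push("gate check failed: " + check.checkName);
  }
  return { allowed: reasons.length === 0, reasons };
}
```

Returning the list of reasons instead of a bare boolean keeps the decision explainable, which matters when the proposal came from a model and someone has to decide what to fix first.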

```mermaid
flowchart TD
    classDef human fill:transparent,stroke:#3b82f6,stroke-width:2px;
    classDef ai fill:transparent,stroke:#8b5cf6,stroke-width:2px;
    classDef gate fill:transparent,stroke:#22c55e,stroke-width:2px;

    Req["Requirements, PRDs, Legacy Tests"]:::human --> Draft["AI Assistant Generates Scenarios, Data, And Draft Playwright Code"]:::ai
    Draft --> Review["Architect Reviews Intent, Scope, And Test Oracle"]:::human
    Review --> PR["Draft Pull Request"]:::ai
    PR --> Gate["Deterministic Quality Gate<br/>Typecheck + Lint + Playwright + Traces"]:::gate
    Gate --> Decision{"Stable Enough To Replace Selenium?"}:::gate
    Decision -- "Yes" --> Retire["Retire Matching Selenium Coverage"]:::human
    Decision -- "No" --> Triage["Use AI For RCA, Not For Authority"]:::ai
    Triage --> Review
```

This is also where guidance on structured outputs, prompt versioning, and human-in-the-loop review becomes practical. If AI is helping generate scenarios, SQL, or test code, treat prompts like governed assets:

- Review them

- Version them

- Regression-test them

- Require evidence when they make strong claims

That keeps acceleration from becoming noise.
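One lightweight way to enforce the "version them" part is a check that flags any prompt whose text changed without a version bump. This is a sketch under assumptions: the `PromptAsset` shape and the idea of comparing an approved library against a proposed one are illustrative, not an existing tool.

```typescript
type PromptAsset = {
  id: string;
  version: string;
  owner: string;
  template: string;
};

// Returns the ids of prompts whose template text changed while the version stayed the same.
function unversionedChanges(approved: PromptAsset[], proposed: PromptAsset[]): string[] {
  const violations: string[] = [];
  for (const next of proposed) {
    const prev = approved.filter((p) => p.id === next.id)[0];
    if (prev && prev.template !== next.template && prev.version === next.version) {
      violations.push(next.id);
    }
  }
  return violations;
}
```

A check like this fits naturally in the same pre-merge gate as lint and typecheck, so a silent prompt edit gets the same scrutiny as a silent code edit.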

Four AI Workflows That Actually Save Time

The important point here is that AI is most valuable when it compresses repetitive reasoning, not when it replaces engineering judgment.

During a Selenium to Playwright migration, four workflows usually deliver immediate leverage:

| Workflow | Inputs | Useful Output | Guardrail |
|---|---|---|---|
| Requirements To Scenarios | PRD, acceptance criteria, legacy flow notes | Edge cases, negative cases, fixture needs, test oracle candidates | Demand structured output, not marketing prose |
| CI Failure To RCA Draft | Trace, screenshot, logs, diff context | Ranked hypotheses, evidence, likely ownership area | Require “how to verify” before accepting the theory |
| SQL And Data Verification | DB schema, API contract, business rule | Verification queries, payloads, synthetic test data | Never allow destructive SQL by default |
| Coverage Diff Review | Legacy Selenium flow, new Playwright implementation | What is preserved, improved, deleted, or still missing | Human signs off on business equivalence |

The key is to force AI into a shaped interface. For example, when decomposing a module into Playwright scenarios, I would rather receive JSON like this than a three-page essay:

```json
{
  "module": "Billing",
  "happy_paths": [],
  "negative_paths": [],
  "edge_cases": [],
  "required_fixtures": [],
  "required_test_data": [],
  "business_oracles": [],
  "open_questions": []
}
```

Why does this matter? Because migrations die from ambiguity. A vague AI answer feels smart, but it does not help a team decide what to build, what to delete, or what still needs a product answer.
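A shaped interface only helps if something rejects answers that do not fit it. The sketch below validates a raw model response against the scenario shape; it assumes every list holds plain strings, which the empty arrays in the example do not actually specify, so adjust that rule to whatever entry format your team settles on.

```typescript
const SCENARIO_LIST_FIELDS = [
  "happy_paths",
  "negative_paths",
  "edge_cases",
  "required_fixtures",
  "required_test_data",
  "business_oracles",
  "open_questions",
];

// Returns a list of problems; an empty list means the response matches the expected shape.
function validateScenarioPlan(raw: string): string[] {
  let parsed: unknown;
  try {
    parsed = JSON.parse(raw);
  } catch {
    return ["not valid JSON"];
  }
  if (typeof parsed !== "object" || parsed === null || Array.isArray(parsed)) {
    return ["top level must be an object"];
  }
  const plan = parsed as Record<string, unknown>;
  const problems: string[] = [];
  if (typeof plan["module"] !== "string" || plan["module"] === "") {
    problems.push("module must be a non-empty string");
  }
  for (const field of SCENARIO_LIST_FIELDS) {
    const value = plan[field];
    if (!Array.isArray(value)) {
      problems.push(field + " must be an array");
      continue;
    }
    // Assumption: entries are plain strings.
    if (value.some((item) => typeof item !== "string")) {
      problems.push(field + " must contain only strings");
    }
  }
  return problems;
}
```

Anything that fails this check goes back to the prompt, not into a planning meeting.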

The same applies to RCA. If a nightly run fails during the migration, a helpful AI result is not “looks like a timeout.” A helpful result is:

- The likely failure cluster

- The strongest two hypotheses

- The exact artifact or trace event supporting each hypothesis

- The fastest verification step for a human reviewer

That is a workflow enhancement. It is not autonomous truth.
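The four elements of a helpful RCA result translate directly into an acceptance check. The names here (`RcaDraft`, `rcaDraftProblems`) are hypothetical, and the cap of two hypotheses simply mirrors the list above rather than any tool's limit.

```typescript
type RcaHypothesis = {
  summary: string;
  evidence: string;     // the exact artifact or trace event supporting the hypothesis
  howToVerify: string;  // the fastest verification step for a human reviewer
};

type RcaDraft = {
  failureCluster: string;
  hypotheses: RcaHypothesis[];
};

// Returns the reasons a draft should be sent back; empty means it is worth a reviewer's time.
function rcaDraftProblems(draft: RcaDraft): string[] {
  const problems: string[] = [];
  if (draft.failureCluster.trim() === "") problems.push("missing failure cluster");
  if (draft.hypotheses.length === 0) problems.push("no hypotheses");
  if (draft.hypotheses.length > 2) problems.push("more than two hypotheses; keep the strongest two");
  draft.hypotheses.forEach((h, i) => {
    const label = "hypothesis " + (i + 1);
    if (h.evidence.trim() === "") problems.push(label + " cites no artifact or trace event");
    if (h.howToVerify.trim() === "") problems.push(label + " has no verification step");
  });
  return problems;
}
```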

The strongest teams also use AI to accelerate boring but high-signal work during migration:

- Generating synthetic test records for risky boundary cases

- Translating legacy business rules into new fixture contracts

- Drafting SQL checks for post-action verification

- Converting noisy defect reports into reproducible bug tickets

Used correctly, these workflows remove friction from the migration. Used lazily, they just generate faster ambiguity.
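For the SQL workflow specifically, "never allow destructive SQL by default" can start as a deliberately conservative filter on AI-drafted queries. This is a naive keyword check written for illustration: it will reject some harmless queries (for example one with the word `delete` inside a string literal), and it is no substitute for running verification queries under a read-only database role.

```typescript
// Accepts a single SELECT or WITH statement and rejects anything that names a write or DDL keyword.
function isReadOnlySql(sql: string): boolean {
  const stripped = sql
    .replace(/--.*$/gm, " ")             // drop line comments
    .replace(/\/\*[\s\S]*?\*\//g, " ")   // drop block comments
    .trim()
    .replace(/;\s*$/, "");               // allow one trailing semicolon
  if (stripped.indexOf(";") !== -1) return false; // reject multi-statement input
  if (!/^(select|with)\b/i.test(stripped)) return false;
  return !/\b(insert|update|delete|drop|alter|truncate|create|grant|revoke|merge)\b/i.test(stripped);
}
```

Erring toward false rejections is the intended trade-off: a human can always approve a blocked query, but nobody can un-run a `DROP TABLE`.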

Analyze, Optimize, Migrate

The Tetris Doctrine is not “rewrite everything later.” It is analyze, optimize, migrate.

1. Analyze

Start by inventorying the real value of the Selenium estate:

- Which tests actually catch meaningful regressions?

- Which suites fail often but teach us nothing?

- Which flows are duplicated across UI, API, and lower layers?

- Which failures are locator problems versus product problems?

This is where AI can help with log clustering, failure categorization, and identifying repeated assertions or stale business flows. It is useful as an analytical partner, especially when the suite is too large for manual reasoning.
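Before reaching for a model, a first pass at the "locator problems versus product problems" question can be a few regular expressions over failure messages. The buckets and their ordering below are assumptions for illustration; the exception names are standard Selenium and JUnit class names, but a real estate will need its own rules.

```typescript
type FailureKind = "locator" | "timeout" | "assertion" | "environment" | "unknown";

// First matching rule wins, so more specific patterns come before WebDriverException.
const FAILURE_RULES: { kind: FailureKind; pattern: RegExp }[] = [
  { kind: "locator", pattern: /NoSuchElementException|StaleElementReferenceException|ElementNotInteractableException/ },
  { kind: "timeout", pattern: /TimeoutException/ },
  { kind: "assertion", pattern: /AssertionError|ComparisonFailure/ },
  { kind: "environment", pattern: /SessionNotCreatedException|WebDriverException|Connection refused/ },
];

function classifyFailure(message: string): FailureKind {
  for (const rule of FAILURE_RULES) {
    if (rule.pattern.test(message)) return rule.kind;
  }
  return "unknown";
}

// Tallies failures per bucket so the inventory shows where the pain concentrates.
function countByKind(messages: string[]): Record<FailureKind, number> {
  const counts: Record<FailureKind, number> = {
    locator: 0, timeout: 0, assertion: 0, environment: 0, unknown: 0,
  };
  for (const message of messages) counts[classifyFailure(message)] += 1;
  return counts;
}
```

Timeouts get their own bucket on purpose: a wait that expires can hide either a brittle locator or a genuinely slow product, and that ambiguity is exactly what a human or an AI-assisted review should resolve next.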

2. Optimize

Do not port garbage.

Delete or redesign:

- Tests for dead features

- Redundant happy-path copies

- UI checks that belong at the API or service layer

- Assertions that only confirm navigation, not business outcomes

Good migrations often reduce the overall test count while improving trust. That is not a paradox. It is architecture.

3. Migrate

Only after the suite is trimmed do we move the surviving scenarios into the new Playwright system, with:

- Clear fixtures

- Stable data strategy

- Useful traces and artifacts

- Better failure semantics

- Shared review from developers, not QA alone

If your team wants a fast rule, use this one:

| If A Selenium Test Is… | Do This |
|---|---|
| Business critical and noisy | Re-architect and migrate |
| Low-value and expensive | Delete it |
| Needed temporarily but not worth porting | Keep manual coverage for a bounded period |
| Valuable but better suited below UI | Replace with API or contract tests |
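The table can be encoded as a small triage function so every legacy test gets the same treatment. The field names and the order in which the rules are checked are assumptions of this sketch; the table itself does not say which row wins when several apply, and anything that matches no row is handed back to a person.

```typescript
type SeleniumTestProfile = {
  businessCritical: boolean;
  noisy: boolean;               // fails often without a product cause
  expensiveToMaintain: boolean;
  betterBelowUi: boolean;       // the rule could be verified at API or contract level
  neededTemporarily: boolean;   // still needed for now, but not worth porting
};

type TriageAction =
  | "re-architect-and-migrate"
  | "delete"
  | "manual-coverage-bounded"
  | "replace-with-api-or-contract"
  | "needs-human-decision";

// Assumed precedence: push valuable checks below the UI first, then migrate, then retire.
function triageSeleniumTest(t: SeleniumTestProfile): TriageAction {
  if (t.businessCritical && t.betterBelowUi) return "replace-with-api-or-contract";
  if (t.businessCritical && t.noisy) return "re-architect-and-migrate";
  if (!t.businessCritical && t.neededTemporarily) return "manual-coverage-bounded";
  if (!t.businessCritical && t.expensiveToMaintain) return "delete";
  return "needs-human-decision";
}
```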

The Balancing Act During Transition

The hardest part of the migration is not code. It is operational discipline while two worlds coexist.

I usually impose five rules.

Rule 1: The Architect’s Mandate

The lead architect should spend their primary energy on the new system: framework conventions, CI, data strategy, reporting, and review quality. If that person becomes the emergency repair desk for Selenium every day, the migration has already slowed down.

Rule 2: No New Selenium

Once the migration starts, no new feature automation is added to Selenium. If a feature lands in a module that has not yet migrated, we accept a temporary manual bridge or lower-level automated coverage where feasible. We do not deepen the legacy hole.
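Rule 2 is easier to keep when the pipeline enforces it. A minimal sketch, assuming the CI job can supply the list of changed files for a pull request and that legacy tests live under a single root such as `legacy/selenium` (both are hypothetical):

```typescript
type ChangedFile = {
  path: string;
  changeType: "added" | "modified" | "deleted";
};

// Returns newly added files under the legacy root; repairs and deletions are left alone.
function newSeleniumFiles(changes: ChangedFile[], legacyRoot: string): string[] {
  const prefix = legacyRoot.replace(/\/+$/, "") + "/";
  return changes
    .filter((c) => c.changeType === "added" && c.path.indexOf(prefix) === 0)
    .map((c) => c.path);
}
```

A non-empty result fails the check. Modifications still pass because Rule 3 decides, case by case, whether a broken legacy test deserves a repair or a retirement.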

Rule 3: Strategic Deprecation

When a legacy test breaks, do not ask only “How do we fix it?” Ask “Should this still exist?” Sometimes the correct move is to retire it immediately and absorb short-term manual verification rather than feed more engineering time into a dying asset.

Rule 4: Prompt-As-Code

If the team uses AI repeatedly for scenario generation, RCA, or SQL verification, maintain prompt libraries with owners, reviews, and expected outputs. Once prompts influence engineering decisions, they deserve version control and regression discipline.

Rule 5: Evidence Over Vibes

AI-generated migration suggestions must include:

- What changed

- Why the change is safe

- What evidence supports it

- How to verify it

That one rule eliminates a large percentage of seductive nonsense.

A 90-Day Phase 1 Blueprint

The first migration phase should be concrete enough that leadership can inspect progress without learning testing theory.

| Window | Goal | Expected Output |
|---|---|---|
| Weeks 1-2 | Inventory, score modules, choose beachhead | Migration map and kill-list for low-value Selenium coverage |
| Weeks 3-4 | Build Playwright foundation | Project structure, fixtures, reporting, CI quality gate, trace policy |
| Weeks 5-8 | Migrate the beachhead module | End-to-end Playwright coverage for one critical business domain |
| Weeks 9-10 | Stabilize and tighten governance | Flake triage, data cleanup, prompt library, review standards |
| Weeks 11-12 | Retire duplicate legacy coverage and report ROI | Selenium decommission for that module and a measured business summary |

This is where a lot of migrations become persuasive. Leadership stops hearing “framework progress” and starts hearing:

- Runtime down

- Flake rate down

- Root-cause time down

- Developer participation up

- Legacy perimeter smaller

That is the language that gets the second and third modules funded.

The KPIs That Actually Matter

Do not measure success by lines translated or test count created. That is vanity.

Measure the first block with business-facing and engineering-facing indicators:

| KPI | Healthy Phase 1 Signal |
|---|---|
| Pass Rate | At least 95% deterministic stability on repeated runs |
| Runtime | 30% or more reduction for the migrated module |
| Flake Rate | Down sharply versus Selenium baseline |
| Time To RCA | Failures explain themselves faster through traces and structured artifacts |
| Developer Participation | Feature-team engineers review and merge Playwright PRs |
| Legacy Retirement | Matching Selenium coverage for the module is formally removed |

If you can present these six signals after the first block, the migration is no longer theoretical.
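The three numeric signals can be computed from run history rather than argued about. The definitions in this sketch are my assumptions, not standard metrics: "deterministic stability" is read as passing on the first attempt, a flaky run is one that passed only on retry, and runtime reduction compares the mean Playwright run against a single Selenium baseline figure for the same module.

```typescript
type RunSample = {
  passed: boolean;
  passedOnRetry: boolean;   // true when the run went green only after a retry
  durationSeconds: number;
};

type PhaseOneKpis = {
  passRate: number;
  flakeRate: number;
  runtimeReduction: number;
  meetsPassRate: boolean;   // at least 95% first-attempt passes
  meetsRuntime: boolean;    // at least 30% faster than the Selenium baseline
};

function phaseOneKpis(runs: RunSample[], seleniumBaselineSeconds: number): PhaseOneKpis {
  if (runs.length === 0 || seleniumBaselineSeconds <= 0) {
    throw new Error("need at least one run and a positive baseline");
  }
  const firstTryPasses = runs.filter((r) => r.passed && !r.passedOnRetry).length;
  const flakyPasses = runs.filter((r) => r.passed && r.passedOnRetry).length;
  const meanSeconds = runs.reduce((sum, r) => sum + r.durationSeconds, 0) / runs.length;

  const passRate = firstTryPasses / runs.length;
  const flakeRate = flakyPasses / runs.length;
  const runtimeReduction = 1 - meanSeconds / seleniumBaselineSeconds;

  return {
    passRate,
    flakeRate,
    runtimeReduction,
    meetsPassRate: passRate >= 0.95,
    meetsRuntime: runtimeReduction >= 0.3,
  };
}
```

As a worked example: 40 runs with 39 first-attempt passes and one retry pass give a pass rate of 39/40 = 97.5% and a flake rate of 2.5%. If those runs average 60 seconds against a 100-second Selenium baseline, the runtime reduction is 1 - 60/100 = 40%, so both thresholds are met.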

What Most Teams Still Miss

Even smart teams miss a few things.

- AI Does Not Create The Test Oracle For You: It helps generate assertions. It does not define business truth.

- Self-Healing Does Not Remove Ownership: It can reduce locator pain, but it does not replace triage policy.

- Security Changes Once AI Can Act: If the model can call tools, prompt injection and insecure output handling move from theory to architecture.

- Data Hygiene Is A Migration Dependency: Garbage logs, weak naming, and inconsistent fixtures reduce both automation quality and AI usefulness.

This is why I keep pointing teams back to controlled interfaces, evidence-backed RCA, and deterministic execution. The migration should modernize the automation estate, but it should also modernize the team’s standards.

If you want the next layer of this conversation, the bridge into AI-native execution and tool economics lives in “The Token War: Why Playwright CLI Defeats MCP in AI-Driven Test Automation” and “WebMCP: The Missing Control Plane Between Agentic AI and Deterministic Test Automation.” Those articles focus on control surfaces. This one focuses on migration operations.

Conclusion: Block By Block, Not Myth By Myth

The Tetris Doctrine works because it converts migration from a faith-based rewrite into a sequence of governed wins.

We choose one business block. We analyze what is worth keeping. We migrate only what deserves to survive. We use AI to accelerate the work without handing it authority. We prove ROI. Then we clear the line and move to the next block.

That is how enterprise migration becomes believable.

If you implement it lazily, Playwright becomes a faster way to carry legacy confusion into a newer stack.

The goal is not to modernize the repository on paper. The goal is to retire risk without creating new chaos.

Architecture > Magic.